At Linode, our Customer Support team works 24/7/365 to help customers solve problems. We accomplish this with a rolling schedule based on call and ticket volume, with eight- or ten-hour shifts starting nearly every hour of every day. Our overnight Support Specialists have the option of working fully remote, and everyone else can work from home a few days every week.

In addition to the standard tools everyone uses these days (Slack, Jira, Confluence, Gmail, GitHub, etc.), we do several non-technological things to keep our team in sync every day. We hold two Daily Downloads (one during the day, one at night), we spread our leadership team across the schedule as much as makes sense, and we cultivate a culture of asynchronous, in-the-moment communication.

When we were brainstorming our 2019 OKRs in late 2018, we asked ourselves what our team most wanted to improve. After much discussion, everyone agreed that, due to the nature of our working hours, our tools, and our culture, we weren't engaging in the peer-to-peer feedback that had come so much more naturally when we were smaller. We knew from past experience, at Linode and elsewhere, that a culture of great internal feedback, both positive and opportunistic, is one of the most important factors in a high-performing team. With this in mind, we set an objective declaring 2019 the Year of Feedback: a year to completely revamp, redesign, and revive strong, widespread, and effective internal feedback throughout our Support Team.

Some of our Support Team in our Philadelphia Headquarters’ Training Room.

Q1: The Linode Support Feedback Model

To begin this year-long initiative, we had to create a strong foundation: a set of values embodying the spirit of feedback at Linode, along with tangible examples of what those values look like in practice. With this in mind, we set a Q1 Key Result to design, deliver, and train on the new Linode Support Feedback Model, consisting of our Feedback Values and Feedback Guidelines.

We're thankful that our team has diverse backgrounds and experience, not only in tech but across many industries and companies of all shapes and sizes. With those backgrounds came many different experiences with internal feedback mechanisms, which we shared and assessed at great length. Our hope was that we'd be able to pick an existing feedback model, study it in depth, develop training, and hit the ground running. Unfortunately, in discussing feedback at our past workplaces, we found good and bad, but mostly bad. The feedback models we had used or found didn't reflect what feedback looks like in real life, and they were often tone-deaf to a company's culture and workplace. Nothing that existed quite fit what we needed, so we set out to build our own.

To start, we listed some universal truths of feedback we believed we needed to incorporate into our model:

- Positive feedback is as important as opportunistic feedback.

- Feedback should be the same peer-to-peer, manager-to-report, and report-to-manager. Hierarchy and reporting relationships are irrelevant when it comes to feedback.

- Feedback should be delivered face-to-face whenever possible, as soon as possible, and directly - not delegated.

- Feedback should be a two-way conversation, not one person talking at another.

- Opportunistic feedback should assume positive intent.

From these truths, we painstakingly iterated on a set of values, reminiscent of our Core Values, that captured the tone, purpose, and importance of feedback:

- We're all on the same team; we all want the same thing. We always assume positive intent when giving or receiving feedback.

  This means we:

  - Enter feedback conversations with an open mind and without assumptions about each other or the situation.
  - Understand that people have different perceptions and that giving feedback isn't always easy.

- We understand that context and environment matter. We're mindful of each other's time and feelings when giving feedback.

  This means we:

  - Prioritize giving feedback sooner rather than later.
  - Don't delegate our feedback to other people.
  - Consider the environment we give feedback in.
  - Always give feedback face-to-face; feedback is dialogue.
  - Eliminate distractions. We focus on each other and what we're saying and hearing, not on our technology.
  - Respect other people's feelings and commit to following up if the conversation gets emotional.

- We seek to understand both sides of the conversation and take away something actionable.

  This means we:

  - Clearly provide specific and actionable feedback.
  - Actively listen to each other.
  - Build relationships by engaging in ongoing and iterative feedback.

- We help each other learn and grow. Balancing specific and impactful positive feedback with opportunistic feedback is an essential part of a great culture.

These Feedback Values, as they came to be called, were the starting point for this initiative. They provided the foundation that drove not only how we approach a feedback conversation, but our culture as a whole, and they solidified the why behind the initiative. Now that we had the why, we needed the how. Enter our Feedback Guidelines:

- Agree that the time is right.

- Communicate what was observed and Exchange perspectives.

- If warranted, Discuss and come to an understanding.

- Wrap-up the conversation.

The full Feedback Model also includes examples of how to exhibit these behaviors, but I've left them out for brevity.

With our Feedback Values and Feedback Guidelines (collectively, the "Feedback Model") in place, all that remained was for our Training Team to develop a training module for new hires and run a continuing education course for our existing Support Team. After running our entire team through training in Q1, we set out to observe results in Q2.

The Support area in our Philadelphia Headquarters, where many of our feedback conversations take place.

Q2: DISC Assessments

In Q2, with our Feedback Model developed and our team trained, our next mission was to get people using it. During our 1-on-1s, we heard that while the model was useful and well-received, not everyone had used it yet. Digging deeper, we observed that people sometimes didn't know how to start the conversation. On a large team, not everyone knows everyone yet, not everyone has worked together or built rapport, and it can be tough for a feedback conversation to be your first-ever conversation with a teammate. Our model didn't account for that, and we needed something to help us start conversations. Enter DISC.

DISC is a behavioral assessment similar to PI or Myers-Briggs. It's designed to show how to approach a conversation with someone so that it will be best received, based on their personality. A group of Trainers and Support Leaders were trained on administering the DISC assessment, and we began integrating DISC profiles into our feedback process, starting by running every Support Specialist through DISC training, both in a group setting and individually with our DISC administrators. We then adapted this training and integrated it into our new-hire curriculum.

DISC training helped us learn how to approach a conversation with a peer so that we come across as approachable and conversational, and therefore effective. Most of us have found DISC to be a helpful communication tool. One issue we've encountered, though, is remembering to actually use someone's DISC profile when approaching a conversation. To start tackling this, we've added a field to our Slack profiles where everyone shares their DISC type, but we're still exploring better ways to reinforce this behavior.

Q3: Quality Control

With a strong Feedback Model in place, training completed, and DISC assessments performed, we realized in Q3 that our work on the Year of Feedback had positioned us to kick off a formal Quality Assurance program. We'd done "Quality Assurance" informally for years, with Customer Support Managers and Senior Customer Support Specialists sporadically reviewing tickets and providing feedback as objectively as possible. Our Feedback Model could now provide the foundation needed to formalize a rigorous, objective Quality Assurance program to ensure consistent, accurate, and timely support for our customers.

Creating a QA program begins with creating a rubric: an objective, exhaustive, and fair set of rules and standards. The full story of our Quality Assurance team could be a post in itself, but to date, our QA team consists of two part-time Quality Assurance Experts who have graded and provided feedback on 1,171 tickets using that rubric. The team is still in its infancy, and we plan to grow the number of experts and to introduce peer-to-peer ticket grading, phone call grading, and other strategies.

Q4: Review, Take-aways, and Lessons Learned

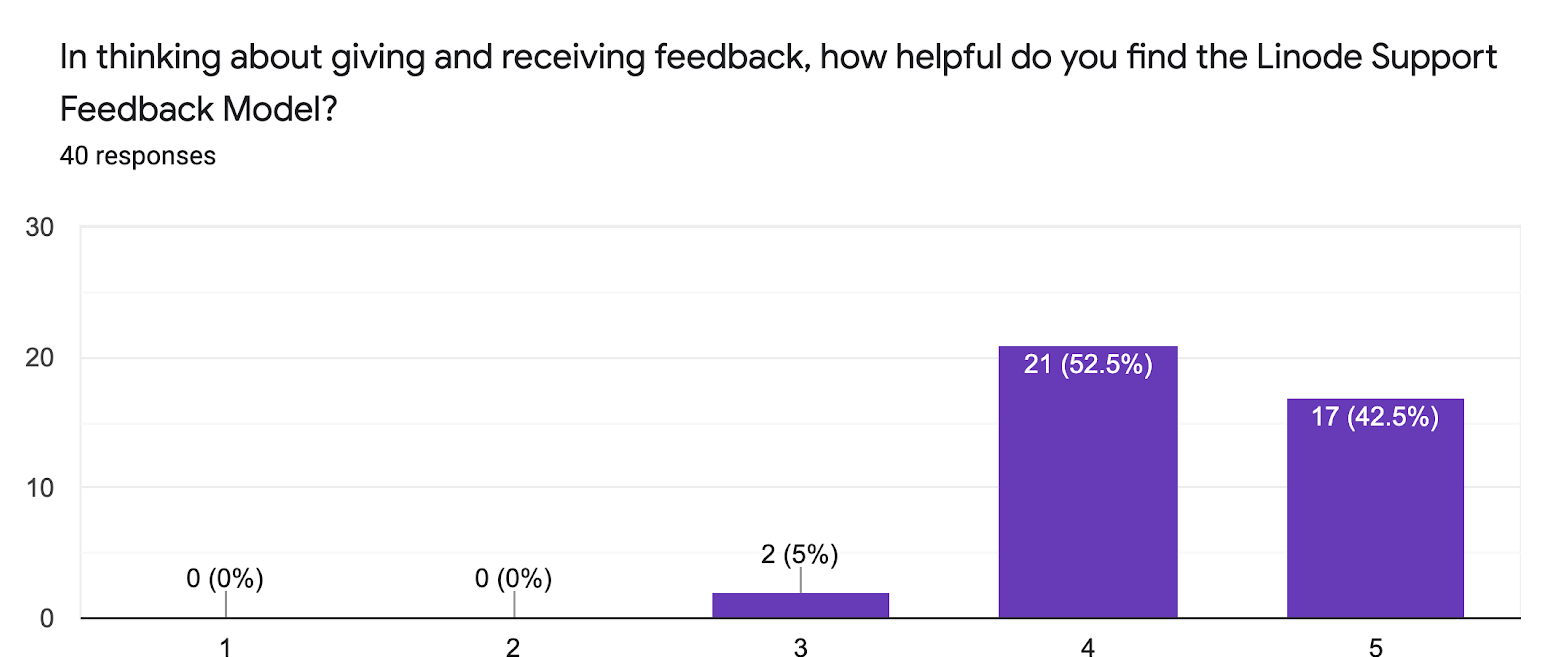

By Q4, we felt that our Feedback Model had started to be truly effective. We were observing more open dialogue, and even though a large portion of feedback is private, we were hearing in our 1-on-1s that the Feedback Model and Values were gaining traction. Our team seemed more open and comfortable with giving and receiving feedback, and the attitude had shifted from feedback being scary to feedback being an opportunity for us to grow every day. To test these observations, we surveyed the team on how they thought it was going. The survey was optional, and a little less than half the team responded. The first question we'll share asked about the general helpfulness of the Linode Support Feedback Model:

All respondents indicated that they found the Linode Support Feedback Model at least moderately helpful (3 out of 5 or better), and the large majority (95%) found it quite helpful (4 out of 5 or better).

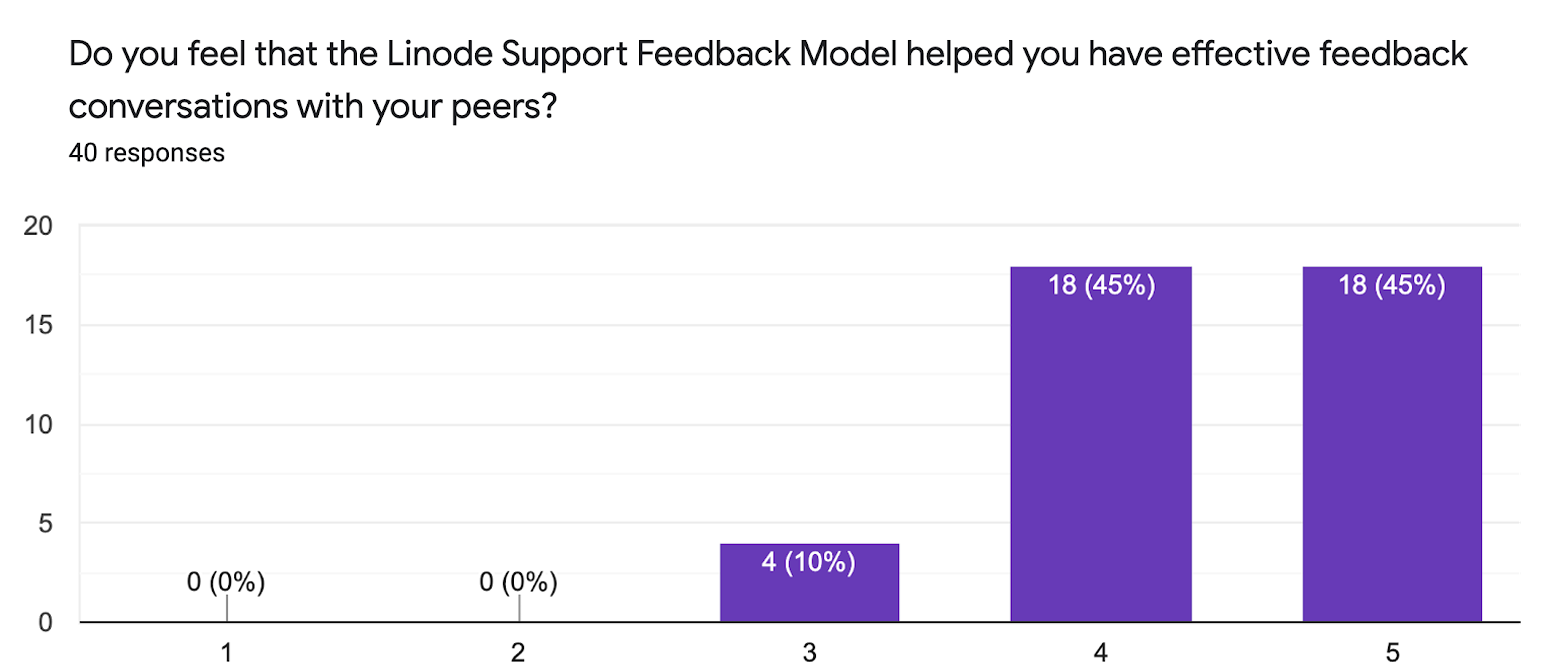

Of those who responded, 90% rated how much the Linode Support Feedback Model helped them at 4 out of 5 or better, and all respondents rated it at least 3 out of 5.

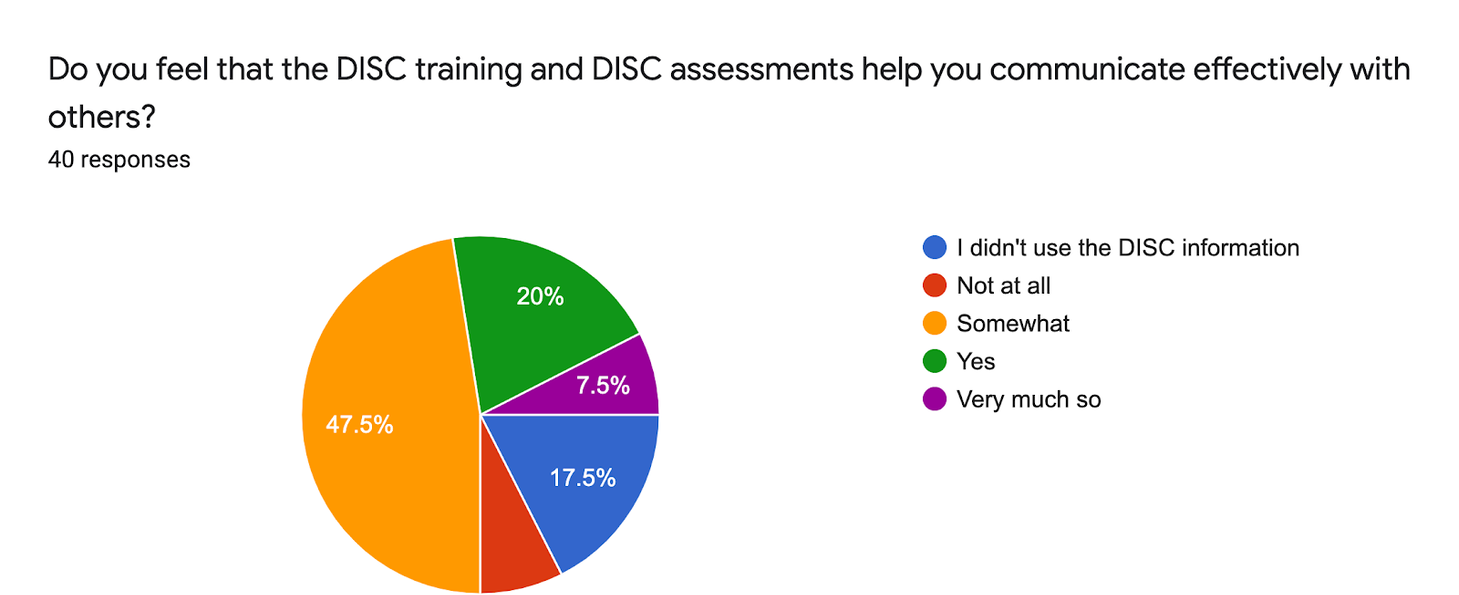

Of the respondents, 75% indicated that they found the DISC training and assessments at least somewhat helpful in having effective feedback conversations with others. The remaining 25% indicated that they did not find it helpful or did not use it.

Overall, we consider our Year of Feedback initiative a success. As noted above, we continue to observe an uptick in feedback conversations and open dialogue. Since the year ended, we've integrated our Feedback Model into our Core Values:

“We value remarkable, innovative, and compassionate individuals who have profound impacts on our team. We use open dialogue and the Support Feedback Model to empower each other to seek every opportunity to improve ourselves, our team, our processes, and our company. We come to work every day committed to being better than we were the day before.”

To top off the initiative, we recently won a Silver Stevie Award for Customer Service Training or Coaching Program of the Year in Technology Industries for our Year of Feedback initiative, including our Feedback Values and Feedback Guidelines. The award was great recognition of our work this year and added motivation to keep driving feedback home, here and elsewhere.

I’d love to hear anyone’s thoughts, opinions, or questions on creating or having a culture of great internal feedback, and I would be happy to help if this is something you’re trying to take on. If you’d like to chat or would like more detail, please reach out on Twitter or send me an email.